Liquid Glass in SwiftUI: Three Patterns From Shipping Return on iOS 26

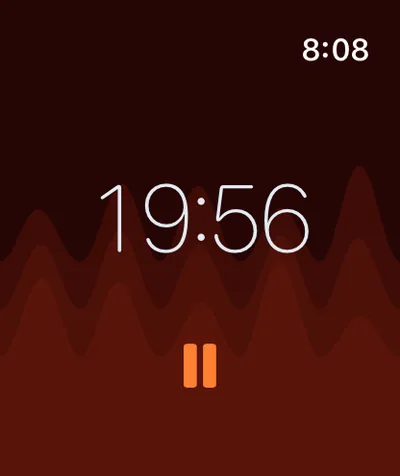

Apple’s Liquid Glass is a one-line SwiftUI API: .glassEffect().1 Return, my meditation timer, uses it nine times across iOS, macOS, and tvOS.2 One of those uses applies the modifier to a custom Shape that turns the timer numbers themselves into liquid glass, glyph by glyph.

The interesting question is what happens when you go beyond the one-liner. Apple’s Human Interface Guidelines lay out a strict layering rule: Liquid Glass belongs in the functional layer (controls, navigation, transient UI) and never in the content layer.3 Most of Return’s nine uses are textbook functional-layer applications: pickers, buttons, control strips, paused-state badges. The ones worth writing about are the few that bend the rule without breaking it, starting with the timer text.

This essay walks through three patterns I shipped, the rules they respect, the pitfalls that caught me, and the API surface I deliberately did not use.

TL;DR

- iOS 26 ships Liquid Glass as .glassEffect(_:in:). The default variant is .regular and the default shape is Capsule.1

- Return uses three patterns that go beyond the one-liner: glass on a custom Shape (timer text via Core Text glyph paths), the mirror pattern (reflection underneath via flipped + masked copy), and functional-layer HUD overlays.

- Apple’s HIG rule: Liquid Glass for the functional layer, standard materials for the content layer.3

- I deliberately did not use GlassEffectContainer. The morphing API has no use case in Return (no glass element animates into another), and I have not benchmarked the rendering-performance gap; this is an unmeasured trade-off, not a recommendation.1

- Pitfalls: glass on a flat background reads as flat; jitter-free digit rendering needs fixed-width cells; tvOS’s HStack appears to ignore the layout-direction environment value; reduce-motion must be honored on the morph animation.

The One-Line API And The Layering Rule

Apple ships Liquid Glass with a small surface area, introduced as a major design pillar at WWDC 2025:1,13

    Text("Hello, World!")
        .font(.title)
        .padding()
        .glassEffect() // default: .regular variant, Capsule shape

    Text("Hello, World!")
        .glassEffect(in: .rect(cornerRadius: 16)) // custom shape

    Text("Hello, World!")
        .glassEffect(.regular.tint(.orange).interactive()) // tint + touch reactivity

Three knobs: variant (.regular or .clear), shape (any Shape), and modifiers chained onto the Glass value (tint, interactive). That is the whole API for a single view. Multi-view rendering is handled by a separate GlassEffectContainer, which I will get to.

The HIG is tighter than the API. Apple’s Human Interface Guidelines define two layers in every iOS 26+ interface:3

- The content layer: the document, list, photo, or media a person is consuming. Use standard materials here (the existing .regularMaterial, .thinMaterial, etc.).

- The functional layer: controls, navigation, tab bars, sidebars, transient overlays. Use Liquid Glass here.

Apple’s specific instruction: “Don’t use Liquid Glass in the content layer. Liquid Glass works best when it provides a clear distinction between interactive elements and content, and including it in the content layer can result in unnecessary complexity and a confusing visual hierarchy.”3

The rule sounds restrictive until you map it onto a real app. Return is a meditation timer. Its content layer is the breathing imagery and the looped video that runs behind everything. Its functional layer is the duration picker, the start/pause/stop button stack, the secondary settings button row, and (on tvOS) the paused-state badge. Eight of Return’s nine glass usages are textbook functional-layer applications: three duration-picker variants for iOS and macOS code paths, the start/pause toggle, the stop button, the settings button row, the tvOS paused indicator, and one more transient control overlay.4

The ninth is the deliberate edge case (Liquid Glass on the timer numbers themselves), which the next section walks through.

Pattern 1: Glass On A Custom Shape

The timer numbers in Return are not text drawn over a glass background. The glass is the text. .glassEffect(.clear, in:) accepts any Shape,9 and a Shape is a Path-producing protocol.10 So the trick is: convert the timer string into a path of glyphs using Core Text,11 then pass that path-as-Shape to .glassEffect.5,6

    import SwiftUI
    @preconcurrency import CoreText

    struct GlassTextShape: Shape {
        let text: String
        let font: CTFont

        func path(in rect: CGRect) -> Path {
            guard !text.isEmpty else { return Path() }

            let combinedPath = CGMutablePath()
            let attrString = NSAttributedString(string: text, attributes: [.font: font])
            let line = CTLineCreateWithAttributedString(attrString)

            guard let runs = CTLineGetGlyphRuns(line) as? [CTRun], !runs.isEmpty else {
                return Path()
            }

            for run in runs {
                let glyphCount = CTRunGetGlyphCount(run)
                guard glyphCount > 0 else { continue }

                var glyphs = [CGGlyph](repeating: 0, count: glyphCount)
                var positions = [CGPoint](repeating: .zero, count: glyphCount)
                let range = CFRange(location: 0, length: glyphCount)
                CTRunGetGlyphs(run, range, &glyphs)
                CTRunGetPositions(run, range, &positions)

                for i in 0..<glyphCount {
                    guard let glyphPath = CTFontCreatePathForGlyph(font, glyphs[i], nil) else { continue }
                    let transform = CGAffineTransform(translationX: positions[i].x, y: positions[i].y)
                    combinedPath.addPath(glyphPath, transform: transform)
                }
            }

            // Core Text y-axis is flipped vs SwiftUI; flip then re-bound and center.
            var swiftPath = Path(combinedPath).applying(CGAffineTransform(scaleX: 1, y: -1))
            let flippedBounds = swiftPath.boundingRect
            let offsetX = rect.midX - flippedBounds.midX
            let offsetY = rect.midY - flippedBounds.midY
            return swiftPath.applying(CGAffineTransform(translationX: offsetX, y: offsetY))
        }
    }

Real production code from Return/Return/GlassTextShape.swift.5 The path(in:) function uses Core Text to lay out the string, walks each CTRun, extracts each glyph’s CGPath, and unions them into one CGMutablePath. The two non-obvious steps come after the union: Core Text’s coordinate system places the origin at the bottom-left, while SwiftUI’s Path puts it at the top-left, so the path has to be flipped via CGAffineTransform(scaleX: 1, y: -1). Then the flipped path’s boundingRect has negative y values, so a translation re-centers it inside the rect SwiftUI hands the Shape. Skip either transform and the glyphs render upside-down or off-screen.
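The flip-then-center arithmetic is easier to follow with numbers. The sketch below is not Return's code: it swaps CGRect for a hypothetical Box struct so it runs without CoreGraphics, and the glyph bounds and rect are made-up values chosen only to show how the y range moves.

```swift
// Minimal stand-in for CGRect so the arithmetic runs without CoreGraphics.
struct Box: Equatable {
    var minX, minY, maxX, maxY: Double
    var midX: Double { (minX + maxX) / 2 }
    var midY: Double { (minY + maxY) / 2 }
}

/// Mirrors a box across the x-axis (y becomes -y), as scaleX: 1, y: -1 does.
func flippedVertically(_ b: Box) -> Box {
    Box(minX: b.minX, minY: -b.maxY, maxX: b.maxX, maxY: -b.minY)
}

/// Translates `b` so its center matches the center of `rect`.
func centered(_ b: Box, in rect: Box) -> Box {
    let dx = rect.midX - b.midX
    let dy = rect.midY - b.midY
    return Box(minX: b.minX + dx, minY: b.minY + dy,
               maxX: b.maxX + dx, maxY: b.maxY + dy)
}

// Made-up glyph bounds in Core Text space (y grows upward, baseline at y = 0).
let glyphBounds = Box(minX: 2, minY: -1, maxX: 42, maxY: 71)
// Made-up rect handed to path(in:) (y grows downward).
let rect = Box(minX: 0, minY: 0, maxX: 100, maxY: 120)

let flipped = flippedVertically(glyphBounds) // y range becomes -71 ... 1
let placed = centered(flipped, in: rect)     // y range becomes 24 ... 96
print(flipped, placed)
```

After the flip almost the whole box sits above y = 0, which is outside the rect; the translation is what brings it back into view.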

Then the application is a single modifier call:

    Rectangle()
        .fill(.clear)
        .glassEffect(.clear, in: textShape)
        .frame(width: cellWidth, height: cellHeight)

The clear Rectangle is a sizing placeholder that gives the glass effect a frame to render in; the actual visual is whatever shape textShape produces. With a glyph-path shape, the Liquid Glass material fills only the glyph outlines. The result: each digit of the timer is a separate liquid-glass form, refracting whatever animation runs behind it.6

The HIG nuance. Apple’s stated rule is Liquid Glass for the functional layer, standard materials for the content layer, with one explicit exception: transient interactive controls in the content layer (sliders, toggles) can take Liquid Glass when activated.3 The timer numbers in Return are state display, not a control: they update once per second from Timer.publish(every: 1, ...) and have no tap gesture (the start/pause button beneath them is what toggles state). So putting Liquid Glass on them is a deliberate edge case, closer to “transient interactive control” by intent than by literal interactivity, since the numbers are the visual focal point users watch the entire session. I am bending the rule, not breaking it. A reviewer who reads the HIG strictly could argue this should be standard material; I argue the timer is a time-elapsed control surface in the same family as a progress indicator. Apple’s docs do not adjudicate the case directly.

Why custom Shape over Text + background. Text rendered above a glass background reads as text on glass. Text rendered as glass itself reads as a different visual category. The user perceives the numbers as functional foreground, specifically as a transient element that exists to be looked through, not at.

Pattern 2: The Mirror Pattern

Return shows a reflection of the timer beneath it, fading out. Real production code:6

    VStack(spacing: 0) {
        GlassTimerText(text: displayTime, fontSize: fontSize)
            .accessibilityLabel("Time remaining: \(accessibleDescription)")

        if showReflection {
            GlassTimerText(text: displayTime, fontSize: fontSize)
                .scaleEffect(x: 1, y: -1)
                .mask(
                    LinearGradient(
                        stops: [
                            .init(color: .white.opacity(0.2), location: 0),
                            .init(color: .clear, location: 0.6)
                        ],
                        startPoint: .top,
                        endPoint: .bottom
                    )
                )
                .offset(y: -8)
                .accessibilityHidden(true)
        }
    }

Three transforms compose the mirror, all standard SwiftUI primitives:14

- scaleEffect(x: 1, y: -1) flips the second copy upside-down.

- .mask(LinearGradient(...)) fades the reflection from 20% opacity at the top to fully transparent at 60% down.

- .offset(y: -8) pulls the reflection up 8 points so it abuts the original instead of leaving a visible seam.
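To make the fade concrete, here is a rough sketch of the mask alpha at a given height of the reflection. It assumes alpha is interpolated linearly between the two gradient stops and stays at the last stop's value past it; the function name is hypothetical and this is an approximation of what the gradient does, not SwiftUI's implementation.

```swift
/// Mask alpha at vertical fraction `t` (0 = top of the reflection, 1 = bottom),
/// assuming linear interpolation of alpha between the two stops.
func reflectionAlpha(at t: Double) -> Double {
    let startAlpha = 0.2 // .white.opacity(0.2) at location 0
    let fadeEnd = 0.6    // .clear at location 0.6
    let clamped = min(max(t, 0), 1)
    // Past the last stop the gradient stays fully transparent.
    guard clamped < fadeEnd else { return 0 }
    return startAlpha * (1 - clamped / fadeEnd)
}

for t in [0.0, 0.3, 0.6, 1.0] {
    print(t, reflectionAlpha(at: t))
}
```

Under that assumption the reflection is never more than one-fifth as opaque as the original, and the bottom 40% of it is invisible.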

The .accessibilityHidden(true) modifier on the reflection is load-bearing. VoiceOver should not announce the mirrored time twice; the original’s accessibilityLabel and accessibilityAddTraits(.updatesFrequently) are already attached to the main GlassTimerText instance above, and the reflection is purely decorative.

Why this works with Liquid Glass specifically. The reflection inherits the glass material from GlassTimerText. Any background the original sits on (a breathing-circle gradient, a video, a tinted scene) refracts through both copies. The mirror does not need any glass-specific code; the glass material handles the refraction for free. The whole effect is three modifiers and a gradient.

The accessibility cost. Reduce-motion users still see the mirror, but the glass material’s animation between time updates is suppressed elsewhere via @Environment(\.accessibilityReduceMotion).7 The reflection itself is static; only the morph between digit transitions animates.

Pattern 3: Glass HUD Overlays For Transient Controls

The remaining eight glass usages in Return are textbook functional-layer applications.4 Each follows the same pattern:

    durationPicker
        .frame(height: 50)
        .frame(maxWidth: 320)
        .glassEffect()
        .padding(.horizontal, 20)
        .transition(.opacity.combined(with: .scale(scale: 0.95)))

The .transition(.opacity.combined(with: .scale(scale: 0.95))) is the load-bearing part. Liquid Glass on transient controls only feels right when the controls actually come and go. A static glass HUD that sits permanently on screen reads as chrome. A glass HUD that fades and scales in when the user taps, and back out when they look away, reads as a momentary control surface.

Apple’s docs on glassEffect note this implicitly: the modifier “captures the content to send to the container to render” and “react[s] to touch and pointer interactions in real time.”1 The animation hooks are not in the API, but the rendering pipeline assumes glass elements move. Static glass elements miss that affordance.

Return uses the pattern for the duration picker (slides up when the user taps), the start/pause toggle button (always visible but scales on press), the stop button (only visible mid-session), the settings button row (a horizontal control strip beneath the duration picker), and the tvOS paused-state badge (only visible when a session is paused on Apple TV). All five contexts respect the HIG’s functional-layer rule.3

The GlassEffectContainer Question

Apple recommends GlassEffectContainer whenever an app uses .glassEffect() on multiple views, for two reasons: better rendering performance (glass effects are batched) and the ability to morph shapes into one another during transitions.1

I did not use it. The reasoning is application-specific, not a refutation of Apple’s guidance. Return has nine glass views, none of which needs to morph into another.4,6 The duration picker never animates into the start button. The timer text never animates into the settings button row. Each glass element is independent. The morphing API would have no use case to fire on, and the container’s spacing rules would constrain layouts that today need no coordination.

The rendering-performance argument I cannot fully refute without measurement. Apple’s docs warn that “too many” glass effects outside a container can degrade performance.1 Return’s nine views never share the screen at once (the duration picker only appears in the menu state, the stop button only when paused mid-session). At any given frame I count three or four glass elements visible, which has been smooth on every device I have tested across iOS, iPadOS, macOS, watchOS, and tvOS, but I have not run an Instruments trace comparing container-wrapped against modifier-only. So the honest framing: Return skips GlassEffectContainer based on an observed-good user experience, not a measured performance equivalence.

The rule I drew from this: GlassEffectContainer is for apps where multiple glass elements are visible and animating simultaneously. Apple’s example is symbol-set rendering with glassEffectUnion(id:namespace:): four weather symbols that fluidly merge and split as one unit.1 That is a textbook use case. If a future Return feature needs glass elements to morph or share a container’s spacing rules, the container is the right tool to add then. For today’s app, I have not yet hit the case.

The Pitfalls That Caught Me

Three real bugs from production:

Glass digit jitter. SF Pro Rounded has variable-width digits in proportional rendering. As the timer counted down, the rendered width of the string changed from second to second, and the surrounding HStack reflowed every time, jittering the entire timer. The fix: fixed-width cells for each character. Each digit gets a cellWidth of fontSize * 0.6, each colon gets fontSize * 0.3, and the HStack becomes a stable grid.6

    HStack(spacing: 0) {
        ForEach(Array(text.enumerated()), id: \.offset) { _, char in
            let isColon = char == ":"
            let cellWidth = isColon ? colonCellWidth : digitCellWidth
            GlassDigitCell(character: String(char), font: ctFont,
                           cellWidth: cellWidth, cellHeight: cellHeight)
        }
    }

The cells are not Apple-standard; they are a workaround for proportional-width rendering at small fixed font sizes. Apple’s SF Pro Rounded with .monospacedDigit() would solve the same problem on Text, but the modifier is not available on a custom Shape-based glass renderer. The fixed-cell layout is the substitute.
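A quick way to see why fixed cells remove the jitter is to compute the row width for two different times. The sketch below uses the 0.6 and 0.3 ratios quoted above; the function names and the font size of 100 are illustrative assumptions, not Return's code.

```swift
/// Ratios quoted above: digit cell = 0.6 × font size, colon cell = 0.3 × font size.
func cellWidth(for character: Character, fontSize: Double) -> Double {
    character == ":" ? fontSize * 0.3 : fontSize * 0.6
}

/// Total row width; it depends only on how many digits and colons there are,
/// never on which digits they are.
func rowWidth(for text: String, fontSize: Double) -> Double {
    text.reduce(0.0) { $0 + cellWidth(for: $1, fontSize: fontSize) }
}

// "1" is a narrow glyph and "8" a wide one in proportional fonts,
// but both strings occupy the same row width with fixed cells.
let narrow = rowWidth(for: "11:11", fontSize: 100)
let wide = rowWidth(for: "08:08", fontSize: 100)
print(narrow, wide)
```

Because the width is a function of the character count alone, the HStack has nothing to reflow when a digit changes.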

tvOS layout-direction override. The same GlassTimerText ran on iOS, iPadOS, macOS, and tvOS. On tvOS specifically, the HStack mirrored under a Right-to-Left language environment even though the iOS version respected the in-environment override. The fix: pin layout direction both via the environment value and via the explicit flipsForRightToLeftLayoutDirection(false) modifier, applied directly to the HStack of digit cells (the parent VStack separately applies the environment override so the reflection copy inherits it):6

    HStack(spacing: 0) { ... }
        .flipsForRightToLeftLayoutDirection(false)
        .environment(\.layoutDirection, .leftToRight)

The reason: tvOS’s HStack appears to ignore the environment-level override in some versions, and flipsForRightToLeftLayoutDirection(false) is the explicit no-mirror contract that is more reliably honored.12 Belt and suspenders.

Reduce-motion on the digit morph. Liquid Glass animates morph transitions between displayed strings by default. Users with accessibilityReduceMotion enabled saw the morph as flickering. The fix:6

    .animation(reduceMotion ? nil : .easeInOut(duration: 0.15), value: displayTime)

The view reads @Environment(\.accessibilityReduceMotion) into reduceMotion and passes nil to the animation modifier when reduce-motion is on, which disables the transition entirely. Apple’s accessibility guidance is explicit: any decorative animation must respect the user’s motion preference.7
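For context, here is a minimal sketch of how that gating might sit inside a view. MotionAwareTimeLabel and morphDuration are hypothetical names, and plain Text stands in for the glass digit cells; only the 0.15-second easeInOut comes from the line above. The SwiftUI part is wrapped in canImport so the helper also compiles where SwiftUI is unavailable.

```swift
#if canImport(SwiftUI)
import SwiftUI
#endif

/// Morph duration in seconds, or nil when motion should be suppressed.
/// 0.15 matches the easeInOut duration quoted above.
func morphDuration(reduceMotion: Bool) -> Double? {
    reduceMotion ? nil : 0.15
}

#if canImport(SwiftUI)
/// Hypothetical stand-in for the timer view (plain Text, no glass).
@available(iOS 15.0, macOS 12.0, tvOS 15.0, watchOS 8.0, *)
struct MotionAwareTimeLabel: View {
    // The view, not the modifier, reads the user's Reduce Motion setting.
    @Environment(\.accessibilityReduceMotion) private var reduceMotion
    let displayTime: String

    var body: some View {
        Text(displayTime)
            // A nil animation applies the change without animating.
            .animation(
                morphDuration(reduceMotion: reduceMotion)
                    .map { Animation.easeInOut(duration: $0) },
                value: displayTime
            )
    }
}
#endif
```

Keeping the decision in a plain function makes the reduce-motion branch testable without rendering a view.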

When Not To Use Liquid Glass

Refusal is part of the design.

Don’t put Liquid Glass in the content layer. Apple’s HIG is explicit, and ignoring the rule produces a confusing hierarchy: the user cannot tell what is interactive and what is content.3 If a glass effect is decorating a list row or a photo card, the design is fighting the platform.

Don’t use glass over a flat background. Liquid Glass refracts what is behind it. If “what is behind it” is a single solid color, the refraction has nothing to bend, and the result reads as a flat tinted rectangle. Either put glass over varied content (a gradient, an image, a video) or do not use glass at all. Return’s timer screen runs theme-based cover imagery and looped video as the background through VideoBackgroundView,4 specifically so the glass elements above it always have texture to refract.

Be cautious about glass on high-frequency content. Glass material rendering is GPU-bound, and the default morph animation between glass shape changes is itself an animation. A timer that updates once per second is fine in my testing; a waveform or audio visualizer at 60 Hz is unproven and likely fights the morph animation. I have not benchmarked the upper bound; treat this as a heuristic, not a measured threshold. Apple’s docs do not publish one.
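A back-of-the-envelope ratio shows why the two cases differ. The sketch assumes the 0.15-second morph from Return's animation modifier and assumes every update starts a new morph; it is a rough heuristic with a hypothetical function name, not a model of how SwiftUI actually schedules or retargets animations.

```swift
/// Rough count of morphs that would be in flight at once if every update
/// started one. A value of 1 or less means each morph finishes before the next.
func morphsInFlight(updatesPerSecond: Double, morphDuration: Double) -> Double {
    morphDuration * updatesPerSecond
}

// A once-per-second timer: each morph occupies 15% of the interval.
let timer = morphsInFlight(updatesPerSecond: 1, morphDuration: 0.15)
// A 60 Hz visualizer: each morph spans about nine updates.
let visualizer = morphsInFlight(updatesPerSecond: 60, morphDuration: 0.15)
print(timer, visualizer)
```

At 1 Hz the morph has finished long before the next tick; at 60 Hz the next update arrives roughly 17 ms into a 150 ms morph, so the animation never settles.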

Don’t ship glass without testing reduce-motion. Every glass animation should be gated on accessibilityReduceMotion.7 The default morph between glass shapes is a kinetic effect, not just a fade.

What Liquid Glass Means For Apps Shipping On iOS 26+

The thesis is small. Liquid Glass is a one-line API only when the app already respects the HIG layering rule. A SwiftUI app that puts controls in the functional layer and content in the content layer can adopt Liquid Glass with .glassEffect() modifiers and feel native by default.

Apps that mix the two layers (controls inside list rows, navigation bars treated as content, decorative chrome on photo cards) will adopt Liquid Glass and feel wrong. The material is correct; the architecture under it is not.

The custom-Shape pattern (Pattern 1) extends the rule cleanly. Anything that is functionally a control can take Liquid Glass, even if it does not look like a “control” in the conventional sense. A timer is a control, a level meter is a control, a progress indicator is a control. Liquid Glass on each of those is on-spec.

Pair this post with my earlier write-ups on shipping the same app’s data layer through App Intents and through an MCP server. The visual layer is the third surface of the same stack: typed entities for system AI, file format for cross-LLM agents, and Liquid Glass for the human at the device.8

FAQ

Can I use .glassEffect() on non-iOS 26 platforms?

The .glassEffect() modifier is iOS 26+, iPadOS 26+, macOS 26+, watchOS 26+, tvOS 26+, visionOS 26+. Pre-26 platforms have .background(.regularMaterial) and similar, which produce frosted-glass effects but not the new Liquid Glass refraction.1

Does GlassEffectContainer change the visual?

Container-wrapped glass elements can blend their shapes together when their spacing rules cause overlap. Without a container, each .glassEffect() is independent. For apps where glass elements should fluidly merge during animation, GlassEffectContainer is the right tool. For apps where each glass element stays distinct, a container is overhead.1

Why not use Text directly with .foregroundStyle(.thinMaterial)?

thinMaterial is a standard material, not Liquid Glass. The visual is a frosted-glass overlay, not the refractive glass-with-light-bending effect of Liquid Glass.3 For text that should look like the new material specifically, .glassEffect(.clear, in: customShape) is the supported path.

How do I capture a Liquid Glass screenshot for marketing?

Glass effects are GPU-rendered at runtime, so screenshots are taken from the simulator or device with the effect already applied. Apple’s official Liquid Glass reference imagery comes from their HIG documentation pages and WWDC 2025 sessions.3

Does GlassTextShape work for arbitrary text or just digits?

Any string Core Text can lay out works. Return uses it for digits and a colon, but the same Shape works for letters, symbols, emoji (with the right font), or mixed strings. The performance is bounded by the glyph count; a long paragraph rendered as glass would be expensive, but a six-character timer is trivial.

Three patterns, one rule, and one API I deliberately skipped. Liquid Glass is the third surface of an iOS 26+ app, sitting on top of typed entities and shared file formats. The one-line API is real. The HIG rule under it is what makes the one-liner work.

References

1. Apple Developer, “Applying Liquid Glass to custom views”. Documentation for the glassEffect(_:in:) modifier, GlassEffectContainer, glassEffectUnion(id:namespace:), glassEffectID(_:in:), and GlassEffectTransition. Default variant .regular, default shape Capsule.

2. Author’s Return, a meditation-timer app published on the App Store on April 21, 2026, available for iPhone, iPad, Mac, Apple Watch, and Apple TV. Uses SwiftUI, SwiftData, and HealthKit on iOS 26+ / macOS 26+.

3. Apple Developer, “Materials” Human Interface Guidelines. Defines the functional vs content layer rule for Liquid Glass: “Don’t use Liquid Glass in the content layer.” Lists the regular and clear variants and their intended uses.

4. Production code in Return/Return/ContentView.swift (seven .glassEffect() call sites), Return/Return/GlassTimerText.swift (one call site on GlassDigitCell), and Return/ReturnTV/TVContentView.swift (one call site on the tvOS “Paused” indicator). Total nine. Plus Return/Return/VideoBackgroundView.swift, which renders the theme-based cover imagery and looped video that the glass elements refract through.

5. Production code in Return/Return/GlassTextShape.swift. The Shape-conforming wrapper around Core Text. Created November 26, 2025, included in shipped App Store v1.0.

6. Production code in Return/Return/GlassTimerText.swift. GlassDigitCell, GlassTimerText, and GlassTimerDisplay views. Implements fixed-width cell layout, the mirror reflection, and reduce-motion gating.

7. Apple Developer, “accessibilityReduceMotion” environment value. Apps must honor the user’s motion preference; default morph animations on Liquid Glass should be gated on the value.

8. Author’s analysis in App Intents Are Apple’s New API to Your App and Two Agent Ecosystems, One Shopping List. The three-surface model: App Intents for Apple Intelligence, MCP for cross-LLM agents, Liquid Glass for the human at the device.

9. Apple Developer, “glassEffect(_:in:isEnabled:)” on View. The in: parameter accepts any Shape-conforming type. The default shape is Capsule.

10. Apple Developer, “Shape” protocol. A Shape is any type that produces a Path for a given rectangle. Custom shapes can wrap arbitrary CGPath data.

11. Apple Developer, “Core Text Programming Guide” and CTLineCreateWithAttributedString. Core Text is the lower-level text engine used to lay out attributed strings into glyph runs and extract per-glyph paths.

12. Apple Developer, “flipsForRightToLeftLayoutDirection(_:)”. Explicitly overrides RTL mirroring on a View regardless of the surrounding \.layoutDirection environment value.

13. Apple, “WWDC 2025 Highlights” via Apple Newsroom. Liquid Glass announced as the unifying design material across iOS 26, iPadOS 26, macOS 26, watchOS 26, tvOS 26, and visionOS 26. Sessions: “Meet Liquid Glass” (WWDC 2025), “Build a SwiftUI app with Liquid Glass”.

14. Apple Developer, “LinearGradient”, “scaleEffect(x:y:anchor:)”, “mask(_:)”. Standard SwiftUI primitives, all available since iOS 13.